In the hospitality industry, storing information about guests and their stays is critical to tailoring offers to each guest. Information regarding the guest and the stay is stored in a system called the property management system (PMS) at the hotel franchise’s corporate headquarters. For each guest, the PMS stores attributes such as the following (this is a sample list of attributes, not a complete list):

- Prefix (Mr., Mrs., Miss, Dr., etc.)

- Full name

- Company name

- Physical address

- One or more e-mail addresses

- One or more telephone numbers

- Gender

The property management system stores information such as the following for each reservation that a guest makes (this is a sample list of attributes, not a complete list):

- Booked date

- Arrival date

- Departure date

- Folio status (booked, checked-in, checked-out, cancelled, no show, etc.)

- Property where the guest will stay

- How the reservation was made

- Promotion response code (the code captured when a reservation is made in response to a promotional e-mail, such as one offering a discount if a reservation is booked in the next 5 days)

- Room type booked

- Length of stay

- Method of payment

- Was the reservation an advance purchase

Property management systems can be set up with a centralized or a decentralized data storage architecture. Let’s discuss what each of these means for storing guest profile and stay data.

Centralized PMS Data Repository:

With this type of storage architecture, all of the guest profile and guest stay data is stored in one location. Therefore, the franchise properties for a particular franchise will access all of the guest profile and stay data from a single location. What does this mean? It means that this system:

- Reduces the amount of duplicate data entered as searching for a guest can be done against a single source

- Records all guest stay data to a single guest profile record

- Eliminates the need to have a data processing company cleanse the data

Decentralized PMS Data Repository:

With this type of storage architecture, the guest profile and guest stay data are stored in multiple locations. Each franchise property has its own storage, and the data from each of these sources rolls up to a single store located at the franchise headquarters. This type of operating environment makes it very difficult to match and merge guest data daily so that each guest is unique. Therefore, there is a definite need to have a data processing company cleanse the data and remove the duplicates. This can be done on a daily, weekly, or monthly basis; however, frequent cleansing may not be very cost effective. So, what are the potential causes of the duplicated data?

- There is no central database of guest profile data

- There is no visibility into guest stays at other properties

- Lack of a single unique identifier for the guest

While there are three main causes for duplicate data, there are also guest data anomalies/concerns that can contribute to the duplicate and inaccurate data. Here are some of the anomalies/concerns:

- Guest data inconsistently entered across properties

- Guest data from external sources (Hotwire, Priceline, etc.)

- Guests not providing personal data (e.g., a generic address or generic e-mail)

- Administrators at a company may be making the reservations for a group of people with different names and email addresses

- Front desk staff are not updating the correct guest profile information

- Data quality issues:

  - Guest name example: first name was blank and last name was Hotel Beds (Wholesale reservation channel)

  - Incorrect guest address, which impacts guest match rate

  - Email address of hotel property entered instead of that of the guest, with insufficient guest profile information

- Operational Data Collection Issues:

  - The field ‘Email’ is not required to be completed

  - Mobile phone numbers are not in PMS with proper masking and numeric fields

  - Guest data cleanup initiative is required
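
Several of the anomalies listed above can be caught with simple checks before the data is sent for cleansing. The following is a minimal sketch only: the field names, the `WHOLESALE_NAMES` set, and the `PROPERTY_EMAIL_DOMAINS` set are hypothetical placeholders, not part of any PMS or of the solution described in this post.

```python
from dataclasses import dataclass

# Hypothetical placeholder values; a real list would come from the franchise.
WHOLESALE_NAMES = {"hotel beds"}
PROPERTY_EMAIL_DOMAINS = {"examplehotel.com"}


@dataclass
class RawProfile:
    first_name: str
    last_name: str
    email: str
    address: str


def quality_flags(profile: RawProfile) -> list[str]:
    """Return the data-quality problems found on one raw guest profile."""
    flags = []
    if not profile.first_name.strip():
        flags.append("blank_first_name")
    # A wholesale channel name entered where the guest's last name belongs.
    if profile.last_name.strip().lower() in WHOLESALE_NAMES:
        flags.append("wholesale_channel_name")
    email = profile.email.strip().lower()
    if not email:
        flags.append("missing_email")
    # The property's own e-mail entered instead of the guest's.
    elif email.rsplit("@", 1)[-1] in PROPERTY_EMAIL_DOMAINS:
        flags.append("property_email")
    if not profile.address.strip():
        flags.append("missing_address")
    return flags
```

Flagged records could be routed to front desk staff for correction rather than being matched as they are.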

As one can see, a decentralized PMS architecture tends to have challenges around the uniqueness of guest profile data. The rest of this blog will focus on the processes for making a guest unique in an operating environment that has a decentralized PMS.

The following section depicts an example solution created with Microsoft Dynamics CRM 2011 on making a guest unique in a decentralized PMS environment. This solution includes the following components and processes:

- PMS Database Tables: these are the tables in the PMS System that store the guest profile information such as name, address, e-mail, etc. and the reservations made by each guest

- Guest Profile:

  - Definition: this is the record in CRM that stores basic information about the guest and is a cumulative rollup of certain guest stay information

  - The basic information stored is:

    - First name

    - Last name

    - Physical address

    - Zip code

    - Telephone number(s)

    - E-mail address(es)

    - Opt-in

    - Gender

  - The cumulative rollup information stored is the following:

    - What was the method used to book the last reservation?

    - What is the most frequent method of booking reservations?

    - What is the total room nights stayed across all reservations?

    - What is the total sum of revenue spent?

    - What is the total number of stays?

    - What was the last date stayed?

    - Is the guest in-house or does he/she have a pending reservation?

    - What was the average daily rate paid by the guest?

    - What is the average length of stay?

- Guest Stay:

  - Definition: this is the record in CRM that stores information about each guest stay. A guest stay record is created anytime a guest books a reservation at a particular franchise property. Therefore, multiple guest stays can be associated with a single guest profile record

  - The information captured on the guest stay form is:

    - The date the reservation was booked

    - The date the guest plans on arriving

    - The date the guest plans on departing

    - The franchise property where the guest will be staying

    - The folio status (booked, checked-in, checked-out, cancelled, no show, etc.)

    - The type of room booked

    - The average daily rate for each particular stay

    - The length of stay for each particular stay

    - The method of payment used to book the reservation

    - Was the reservation an advance purchase?
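
To make the rollup fields on the guest profile concrete, here is a small sketch of how they might be derived from a guest's stay records. It is an illustration under assumptions, not the Dynamics CRM implementation: the `GuestStay` class and its fields are hypothetical, `room_revenue` is assumed to be the revenue for the whole stay, the "last" reservation is taken to be the one with the latest arrival date, and cancelled or no-show stays are assumed to have been filtered out beforehand.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class GuestStay:
    arrival: date
    departure: date
    booking_method: str
    room_revenue: float  # assumed: room revenue for the whole stay

    @property
    def nights(self) -> int:
        return (self.departure - self.arrival).days


def rollup(stays: list[GuestStay]) -> dict:
    """Summarize a guest's stays into profile-level rollup fields."""
    if not stays:
        return {}
    ordered = sorted(stays, key=lambda s: s.arrival)
    total_nights = sum(s.nights for s in ordered)
    total_revenue = sum(s.room_revenue for s in ordered)
    methods = Counter(s.booking_method for s in ordered)
    return {
        "total_stays": len(ordered),
        "total_room_nights": total_nights,
        "total_revenue": total_revenue,
        "last_date_stayed": max(s.departure for s in ordered),
        "last_booking_method": ordered[-1].booking_method,
        "frequent_booking_method": methods.most_common(1)[0][0],
        # Average daily rate = revenue per room night.
        "average_daily_rate": total_revenue / total_nights if total_nights else 0.0,
        "average_length_of_stay": total_nights / len(ordered),
    }
```

Recomputing the rollup from the full list of stays, rather than incrementing counters, keeps the profile correct after stays are re-associated during reconciliation.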

Now that we know what the components are, let’s discuss how these components are used in the following three processes:

- Daily loading

- Cleansing

- Reconciliation

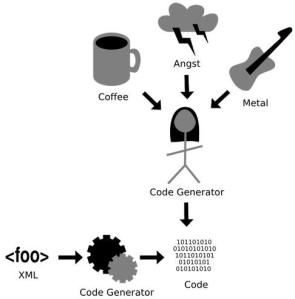

Figure 1: Guest Data LifeCycle

Daily Loading Process:

The first process we will discuss is the daily loading of the reservations. For each reservation loaded into Dynamics CRM, a guest profile record is created, along with a corresponding guest stay record that is associated with the guest profile record. For example, if John Smith makes two reservations (regardless of the reservation channel) for two rooms, he will have two guest profile records and two guest stay records in the PMS. Each guest stay record will be associated with one guest profile record. Since the data is stored in this manner in the PMS, it is loaded from the PMS into Dynamics CRM in the same way. This means that every reservation produces its own guest profile record and associated guest stay record, even if the guest stays multiple times at a franchise property in a short period such as a month. This is referred to as un-cleansed data.
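
The behavior described above, one new profile and one new stay per reservation with no attempt at matching, can be sketched as follows. This is an in-memory illustration only: `CrmStore`, `load_reservation`, and the dictionary keys are hypothetical names and do not correspond to the Dynamics CRM API.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class CrmStore:
    """In-memory stand-in for the CRM guest profile and guest stay entities."""
    profiles: dict = field(default_factory=dict)
    stays: dict = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def load_reservation(self, reservation: dict) -> int:
        """Create a new profile and a linked stay for a single reservation.

        No lookup for an existing guest is performed, so repeat guests
        produce duplicate (un-cleansed) profiles.
        """
        profile_id = next(self._ids)
        self.profiles[profile_id] = {
            "first_name": reservation["first_name"],
            "last_name": reservation["last_name"],
            "email": reservation["email"],
            "cleansed": False,
        }
        stay_id = next(self._ids)
        self.stays[stay_id] = {
            "profile_id": profile_id,
            "arrival": reservation["arrival"],
            "property": reservation["property"],
        }
        return profile_id
```

Loading two reservations for the same John Smith through this sketch yields two profiles and two stays, which is exactly the duplication the cleansing process later has to resolve.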

Cleansing Process:

On a regularly scheduled basis, such as a weekly or monthly period, the guest profile data from the PMS is compiled into a file and sent to a data processing company. The data processing company reviews for duplicates based on the following criteria:

- First name

- Last name

- E-mail address

- Physical address:

  - Street 1

  - Street 2

  - City

  - State

  - Zip code
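
As a rough illustration of matching on the criteria above, the sketch below groups profiles whose normalized fields are identical. It is a simplification: the post does not describe the data processing company's actual matching rules, which may well be fuzzier (nicknames, address standardization, and so on). The field names in `MATCH_FIELDS` are hypothetical.

```python
import re
from collections import defaultdict

MATCH_FIELDS = ("first_name", "last_name", "email",
                "street1", "street2", "city", "state", "zip")


def normalize(value: str) -> str:
    """Lowercase, trim, and collapse internal whitespace."""
    return re.sub(r"\s+", " ", (value or "").strip().lower())


def match_key(profile: dict) -> tuple:
    """Build the comparison key from the match criteria."""
    return tuple(normalize(profile.get(f, "")) for f in MATCH_FIELDS)


def group_duplicates(profiles: list[dict]) -> list[list[dict]]:
    """Return groups of two or more profiles that share a match key."""
    groups = defaultdict(list)
    for p in profiles:
        groups[match_key(p)].append(p)
    return [g for g in groups.values() if len(g) > 1]
```

Exact matching after normalization only catches differences in case and spacing; inconsistently entered names or addresses would need the more tolerant matching a specialist vendor provides.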

Reconciliation Process:

(This can be done on a weekly or monthly basis. For this discussion, the reconciliation process runs monthly, at the end of each month.)

- Step 1: Once the duplicate records are removed and the cleansed file is returned, the new guest profile data is loaded into a staging database, where each cleansed guest profile record is associated with its guest stay records. For example, suppose a file containing the un-cleansed guest profile records for the entire month of January was sent to the data processing company. If the un-cleansed file contained two John Smith records that match on the above criteria, the two records are compared and the one with the most complete information is kept. With the returned cleansed file containing only the one John Smith guest profile, the next step is to associate all of the guest stays that John Smith had in January with his cleansed guest profile record. If John Smith stayed five times in January, all five stay records would now be associated with his cleansed guest profile record.

In a nutshell, when the un-cleansed file is sent to the data processing company at the end of each month, the data being cleansed is for the month that just ended. Once the cleansed file is returned with the unique guests for the month, the guest stays for each of those guests for that month are associated with each unique guest profile record.

- Step 2: Once step 1 is completed, the unique guest profiles and their associated guest stays for the previous month are loaded into Dynamics CRM 2011. This is performed in two separate steps:

  - First, the unique guest profiles are loaded

  - Then, the guests’ stays are loaded and associated with the guest profile records

- Step 3: Once step 2 is completed, the un-cleansed guest profile and guest stay data loaded during the previous month is purged. This purge deletes only the un-cleansed guest profile and guest stay data. The cleansed guest profile and guest stay data remain in Dynamics CRM permanently.
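
The core of Step 1, keeping the most complete record from each set of duplicates and re-pointing every stay at it, might be sketched as below. This is an assumption-laden illustration: "most complete" is interpreted here as the largest count of non-empty fields, ties go to the first record in the group, and the `id` and `profile_id` keys are hypothetical.

```python
def completeness(profile: dict) -> int:
    """Count the non-empty fields on a profile."""
    return sum(1 for v in profile.values() if v not in (None, ""))


def reconcile(duplicate_groups, stays):
    """Pick one survivor per group and re-point every stay at it.

    duplicate_groups: list of lists of profile dicts, each with an "id" key.
    stays: list of stay dicts, each with a "profile_id" key.
    Returns (survivors, relinked_stays); the inputs are not modified.
    """
    survivors = []
    remap = {}
    for group in duplicate_groups:
        keeper = max(group, key=completeness)
        survivors.append(keeper)
        for profile in group:
            remap[profile["id"]] = keeper["id"]
    # Stays whose profile was not part of any group keep their original link.
    relinked = [dict(s, profile_id=remap.get(s["profile_id"], s["profile_id"]))
                for s in stays]
    return survivors, relinked
```

With the John Smith example, both January profiles map to the surviving record, so all five stays end up associated with a single guest profile before the load in Step 2.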

As one can see, when a PMS has a decentralized storage architecture, there are some processes that need to be put in place to make a guest unique.
